1. Introduction to Google Cloud Anthos

Google Cloud Anthos is a modern application management platform designed to help organizations build, deploy, and manage applications consistently across on-premises environments, Google Cloud, and other public clouds such as AWS and Azure.

Anthos was introduced to address one of the biggest challenges in enterprise IT: operating applications across heterogeneous environments without fragmentation. Traditional cloud migration often leads to vendor lock-in, inconsistent security policies, and operational complexity. Anthos solves this by providing a single control plane for infrastructure, applications, and services.

At its core, Anthos is built on Kubernetes, enabling containerized workloads to run anywhere with uniform governance, security, and observability.

2. Why Anthos Was Created

Enterprise Challenges Before Anthos

- Legacy on-premises applications that are difficult to modernize

- Inconsistent tooling across clouds

- Vendor lock-in concerns

- Security and compliance gaps

- Operational overhead managing multiple platforms

Anthos Objectives

- Enable hybrid and multi-cloud strategies

- Provide application portability

- Centralize policy enforcement and security

- Reduce operational complexity

- Support gradual cloud modernization

Anthos allows enterprises to modernize at their own pace without rewriting applications or abandoning existing infrastructure.

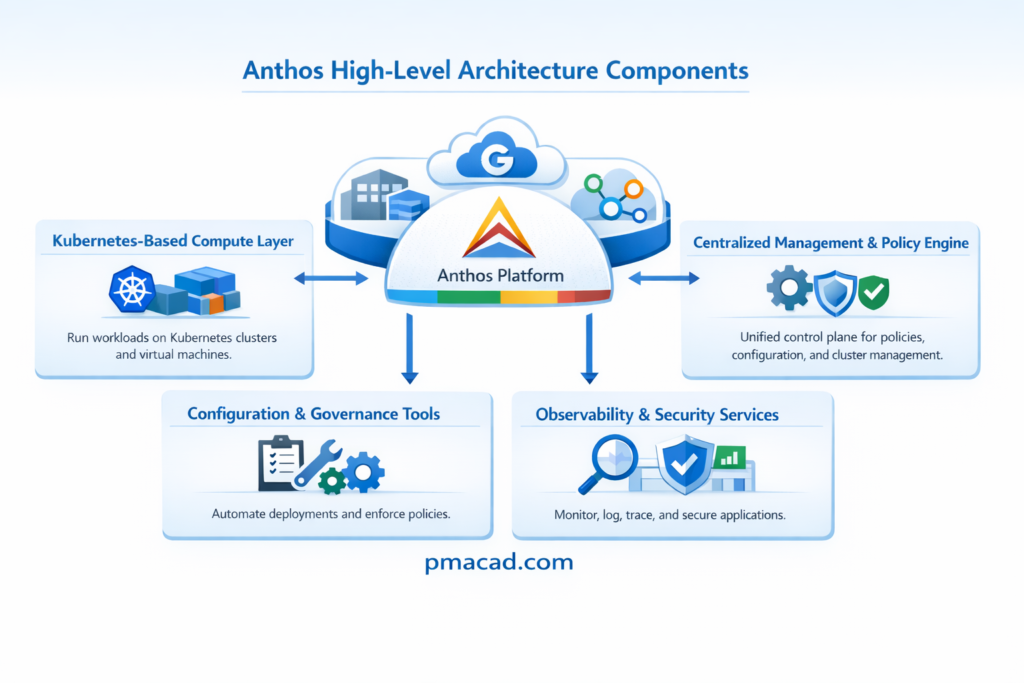

3. Core Architecture of Anthos

Anthos is not a single product, but a platform composed of multiple integrated services.

High-Level Architecture Components

- Kubernetes-based compute layer

- Centralized management and policy engine

- Service mesh for microservices

- Configuration and governance tools

- Observability and security services

Anthos provides a control plane in Google Cloud that manages workloads running across different environments.

4. Anthos Kubernetes Foundation

Kubernetes as the Backbone

Kubernetes serves as the foundational backbone of Google Cloud Anthos, providing a consistent, open, and portable platform for running containerized applications across environments.

Anthos is built on upstream Kubernetes, ensuring full compatibility with open-source standards and preventing vendor lock-in. By standardizing on Kubernetes, Anthos enables applications to run seamlessly on Google Cloud, on-premises data centers, and other public clouds using the same APIs, tools, and operational models.

Kubernetes handles container orchestration tasks such as scheduling, scaling, self-healing, and service discovery. This uniform Kubernetes layer allows Anthos to deliver consistent deployment, security, and management experiences across hybrid and multi-cloud infrastructures.

In short, building on upstream Kubernetes gives Anthos:

- No proprietary lock-in

- Open-source compatibility

- Portability across environments

Key Kubernetes Offerings in Anthos

a. Google Kubernetes Engine (GKE)

Google Kubernetes Engine (GKE) is a fully managed Kubernetes service that runs on Google Cloud and forms the core runtime environment for Anthos. It handles critical operational tasks such as cluster provisioning, automated upgrades, patch management, and node scaling. GKE integrates deeply with Google Cloud security services, identity management, logging, and monitoring. By abstracting infrastructure complexity, GKE allows teams to focus on application development while benefiting from high availability, reliability, and performance at scale.

b. Anthos Clusters on VMware

Anthos Clusters on VMware enables organizations to run Kubernetes directly on their existing VMware-based on-premises infrastructure. This offering is ideal for enterprises with large data centers that want to modernize applications without migrating immediately to the cloud. It supports running containerized workloads using the same Kubernetes APIs and tools as in Google Cloud. Applications do not need to be refactored, allowing a smooth transition to cloud-native architectures while preserving existing investments.

c. Anthos on Bare Metal

Anthos on Bare Metal allows Kubernetes clusters to run directly on physical servers without a hypervisor. This approach reduces virtualization overhead and delivers improved performance, making it suitable for latency-sensitive and high-throughput workloads. It is commonly used in edge environments, telecom networks, and manufacturing facilities where real-time processing is required. Anthos on Bare Metal provides centralized management, security, and policy enforcement while supporting specialized hardware and disconnected or constrained environments.

d. Anthos on Other Clouds

Anthos on other clouds extends Anthos capabilities to Kubernetes clusters running on public cloud platforms such as AWS and Azure. It enables organizations to manage multi-cloud environments using a single control plane hosted in Google Cloud. This offering ensures consistent configuration, security policies, and observability across clouds. By supporting Kubernetes clusters outside Google Cloud, Anthos helps enterprises avoid vendor lock-in, improve resilience, and implement true multi-cloud application strategies.

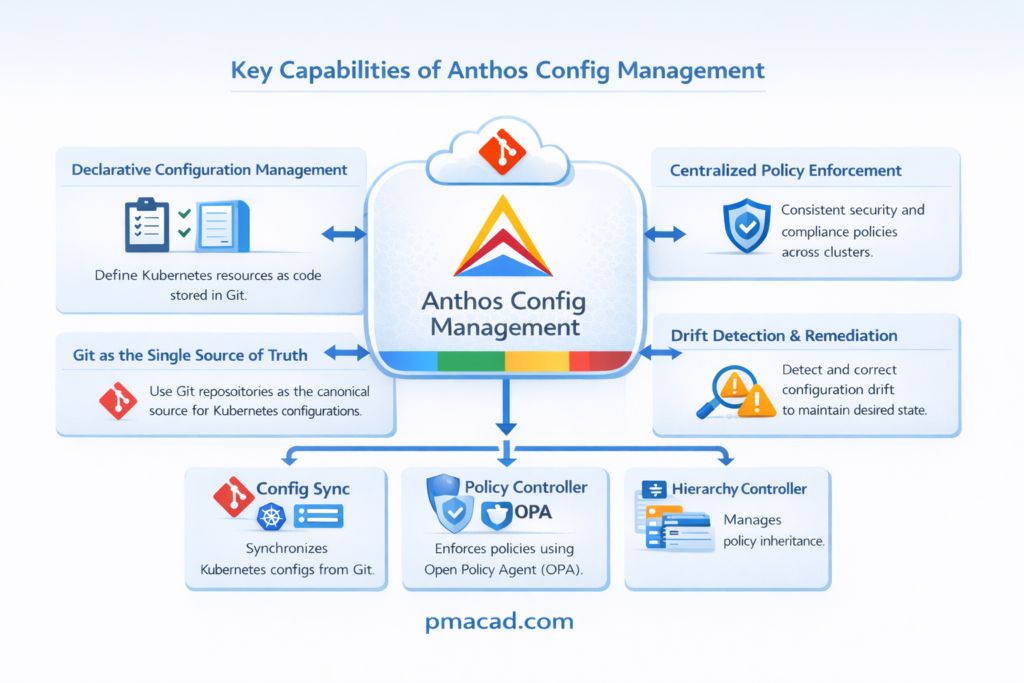

5. Anthos Config Management

Anthos Config Management (ACM) is a governance and configuration service that lets organizations manage Kubernetes configurations and policies consistently across multiple clusters and environments. It follows a GitOps model, in which configuration files stored in a Git repository act as the single source of truth.

Anthos Config Management automatically applies, enforces, and monitors these configurations across all registered clusters. It helps detect configuration drift, prevent policy violations, and ensure compliance with organizational standards. By using declarative configuration and automated enforcement, Anthos Config Management improves security, simplifies audits, and reduces operational errors in hybrid and multi-cloud Kubernetes deployments.

Key Capabilities of ACM

Declarative Configuration Management

Declarative configuration management defines the desired state of infrastructure and applications rather than specifying step-by-step instructions. Systems continuously compare the actual state with the declared state and automatically make corrections, ensuring consistency, repeatability, and reduced manual intervention across environments.

Centralized Policy Enforcement

Centralized policy enforcement ensures that security, compliance, and operational policies are applied uniformly across all clusters and environments. Policies are defined once and enforced everywhere, preventing misconfigurations, reducing security risks, and simplifying governance in hybrid and multi-cloud deployments.
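
The idea of defining a policy once and checking every resource against it can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how Policy Controller is implemented: the helper names (`require_label`, `forbid_privileged`, `evaluate`) and the sample manifest are hypothetical, and the manifest is a plain dictionary shaped like a Kubernetes Pod.

```python
from typing import Any, Callable, Dict, List, Optional

Manifest = Dict[str, Any]
# A policy inspects a manifest and returns a violation message, or None if it passes.
Policy = Callable[[Manifest], Optional[str]]


def require_label(label: str) -> Policy:
    """Build a policy that requires `label` under metadata.labels (hypothetical helper)."""
    def check(manifest: Manifest) -> Optional[str]:
        labels = manifest.get("metadata", {}).get("labels", {})
        return None if label in labels else f"missing required label '{label}'"
    return check


def forbid_privileged(manifest: Manifest) -> Optional[str]:
    """Reject Pod-like manifests that contain a privileged container."""
    for container in manifest.get("spec", {}).get("containers", []):
        if container.get("securityContext", {}).get("privileged", False):
            return f"container '{container.get('name')}' is privileged"
    return None


def evaluate(manifest: Manifest, policies: List[Policy]) -> List[str]:
    """Apply every policy and collect the violation messages, in policy order."""
    violations = []
    for policy in policies:
        message = policy(manifest)
        if message is not None:
            violations.append(message)
    return violations


# The same policy list would be applied to resources from every cluster.
POLICIES = [require_label("team"), forbid_privileged]

pod = {
    "metadata": {"name": "checkout", "labels": {}},
    "spec": {"containers": [{"name": "app", "securityContext": {"privileged": True}}]},
}
violations = evaluate(pod, POLICIES)
```

An empty result means the resource may be admitted; any message in the list would block it before deployment.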

Drift Detection and Remediation

Drift detection identifies differences between the desired configuration stored in source control and the actual running configuration. When drift occurs due to manual changes or failures, automated remediation restores the system to the approved state, maintaining reliability and compliance.
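
As a rough sketch of the comparison described above, the following Python treats the desired state (from Git) and the actual state (from a cluster) as flat dictionaries. The function names and sample values are hypothetical, and real tools compare full Kubernetes objects rather than flat key-value pairs.

```python
from typing import Any, Dict, List, Tuple

Config = Dict[str, Any]

_ABSENT = object()  # sentinel meaning "this key is not present"


def detect_drift(desired: Config, actual: Config) -> List[Tuple[str, Any, Any]]:
    """Return (key, desired_value, actual_value) for every key that differs.

    A value of None in the result means the key is absent on that side.
    """
    drift = []
    for key in sorted(set(desired) | set(actual)):
        want = desired.get(key, _ABSENT)
        have = actual.get(key, _ABSENT)
        if want != have:
            drift.append((
                key,
                None if want is _ABSENT else want,
                None if have is _ABSENT else have,
            ))
    return drift


def remediate(desired: Config, actual: Config) -> Config:
    """Restore the approved state by returning a copy of the desired config."""
    return dict(desired)


desired = {"replicas": 3, "image": "shop:1.4"}
actual = {"replicas": 5, "image": "shop:1.4", "debug": True}  # changed by hand

drift = detect_drift(desired, actual)
restored = remediate(desired, actual)
```

Here `drift` reports the unexpected `debug` key and the changed `replicas` value, and `restored` no longer differs from the desired state.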

Git as the Single Source of Truth

Using Git as the single source of truth means all configurations, policies, and infrastructure definitions are stored and versioned in Git repositories. This enables traceability, change history, collaboration, easy rollback, and automated auditing across distributed environments.

Components of ACM

- Config Sync: Synchronizes Kubernetes configs from Git

- Policy Controller: Enforces policies using Gatekeeper, which is built on Open Policy Agent (OPA)

- Hierarchy Controller: Manages policy inheritance

Benefits of ACM

- Consistent configurations across clusters

- Improved security posture

- Faster audits and compliance validation

6. Anthos Service Mesh

Anthos Service Mesh (ASM) is a fully managed service mesh built on the open-source Istio project. It provides secure, observable, and reliable communication between microservices by moving service-to-service networking into a dedicated infrastructure layer, without requiring changes to application code.

Anthos Service Mesh enables features such as mutual TLS for encrypted communication, intelligent traffic management, load balancing, retries, and fault injection. It also delivers deep observability through metrics, logs, and traces, helping teams understand service behavior.

By standardizing networking and security across hybrid and multi-cloud environments, Anthos Service Mesh simplifies microservices operations and improves application reliability and security.

What is a Service Mesh?

A service mesh is a dedicated infrastructure layer that manages communication between microservices in a distributed application. Instead of embedding networking logic inside application code, a service mesh handles service discovery, load balancing, traffic routing, security, and observability transparently. It typically works by deploying lightweight proxy sidecars alongside each service, intercepting all inbound and outbound traffic. This allows features such as encrypted communication, retries, circuit breaking, and detailed monitoring without modifying applications.

Service meshes are especially useful in large microservices architectures, where managing service-to-service communication, security, and reliability becomes complex as the number of services grows.

Core Features

- Mutual TLS (mTLS): Mutual TLS encrypts service-to-service communication and verifies both client and server identities, ensuring secure and trusted internal connections.

- Traffic management: Traffic management controls how requests are routed between services, enabling canary releases, blue-green deployments, and version-based traffic splitting.

- Load balancing: Load balancing distributes incoming requests across multiple service instances to improve performance, availability, and prevent overloading individual services.

- Observability: Observability provides visibility into system behavior using metrics, logs, and traces, helping teams monitor performance and troubleshoot issues effectively.

- Fault injection and retries: Fault injection simulates failures, while retries automatically reattempt failed requests, improving system resilience and testing application reliability.
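
To make version-based traffic splitting concrete, here is a minimal Python sketch of a 90/10 canary split. It is an illustration only: in a mesh the weights are declared in configuration and applied by the proxies, whereas this example hashes a request ID so that the choice is deterministic and easy to test. The function name `pick_version` and the version labels are hypothetical.

```python
import hashlib
from typing import Dict


def pick_version(request_id: str, weights: Dict[str, int]) -> str:
    """Choose a service version for a request.

    `weights` maps version name to a percentage; the values must be
    non-negative and sum to 100.
    """
    if any(w < 0 for w in weights.values()) or sum(weights.values()) != 100:
        raise ValueError("weights must be non-negative and sum to 100")

    # Map the request ID to a stable bucket in the range 0..99.
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100

    # Walk the cumulative weights until the bucket falls inside a range.
    threshold = 0
    for version, weight in weights.items():
        threshold += weight
        if bucket < threshold:
            return version
    raise AssertionError("unreachable when weights sum to 100")


# Canary release: send roughly 10% of requests to the new version.
CANARY = {"v1": 90, "v2": 10}
chosen = pick_version("req-12345", CANARY)
```

Shifting the weights step by step (for example 90/10, then 50/50, then 0/100) is what turns this split into a canary rollout; a blue-green switch is the special case of moving from 100/0 to 0/100 in one step.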

Key Advantages

- Secure service communication by default

- Fine-grained traffic control (canary deployments, blue-green deployments)

- Deep visibility into microservice behavior

Anthos Service Mesh is essential for enterprises adopting microservices architectures.

7. Security in Anthos

Security is a foundational pillar of Anthos, not an afterthought.

Built-in Security Features

- Identity-based access using IAM: Identity and Access Management (IAM) controls who can access resources based on authenticated identities rather than network location. Permissions are granted through roles, ensuring least-privilege access. IAM integrates with Kubernetes and cloud services to manage users, workloads, and service accounts securely and consistently across environments.

- mTLS for internal traffic: Mutual TLS secures internal service-to-service communication by encrypting traffic and verifying both client and server identities. This prevents unauthorized services from communicating within the cluster and protects data from interception, spoofing, and man-in-the-middle attacks in microservices architectures.

- Policy-based controls: Policy-based controls enforce organizational rules and compliance requirements automatically. These policies restrict configurations, deployments, and access patterns, preventing insecure or non-compliant resources from being created. Policy enforcement ensures consistency, reduces human error, and strengthens security across hybrid and multi-cloud environments.

- Secure software supply chain: A secure software supply chain ensures that only trusted, verified, and approved code is built, tested, and deployed. It includes image signing, vulnerability scanning, and deployment validation, reducing risks from compromised code, malicious dependencies, or unauthorized container images.

Anthos Security Tools

a. Binary Authorization

- Ensures only trusted container images are deployed

- Enforces image signing and verification
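
The sign-then-verify idea behind image verification can be sketched with the Python standard library. This is a simplified stand-in and not the Binary Authorization API: an HMAC with a shared key replaces the asymmetric signatures a real attestor would use, and the function names, key, and digest value are all hypothetical.

```python
import hashlib
import hmac


def sign_digest(image_digest: str, key: bytes) -> str:
    """Produce an attestation for an image digest (HMAC as a stand-in signature)."""
    return hmac.new(key, image_digest.encode("utf-8"), hashlib.sha256).hexdigest()


def is_deploy_allowed(image_digest: str, attestation: str, key: bytes) -> bool:
    """Allow deployment only if the attestation matches the image digest."""
    expected = sign_digest(image_digest, key)
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(expected, attestation)


ATTESTOR_KEY = b"example-key-for-illustration-only"  # hypothetical key
trusted_image = "sha256:" + "ab" * 32                # hypothetical image digest

attestation = sign_digest(trusted_image, ATTESTOR_KEY)
allowed = is_deploy_allowed(trusted_image, attestation, ATTESTOR_KEY)
blocked = is_deploy_allowed("sha256:" + "cd" * 32, attestation, ATTESTOR_KEY)
```

Because the attestation is bound to the image digest, an attestation issued for one image cannot be reused to admit a different one.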

b. Policy Controller

- Enforces compliance policies across clusters

- Prevents misconfigurations before deployment

c. Workload Identity

- Secure identity mapping between Kubernetes and cloud services

- Eliminates long-lived service account keys

Compliance Support

Anthos supports:

- GDPR (General Data Protection Regulation): GDPR is a European Union regulation that governs how personal data of individuals is collected, processed, stored, and transferred. It emphasizes data privacy, user consent, transparency, breach notification, and strong security controls for protecting personal information.

- HIPAA (Health Insurance Portability and Accountability Act): HIPAA is a U.S. healthcare regulation that protects electronic protected health information (ePHI). It requires strict administrative, technical, and physical safeguards to ensure confidentiality, integrity, and availability of sensitive patient data.

- ISO 27001: ISO 27001 is an international standard for establishing and maintaining an information security management system (ISMS). It focuses on risk management, security controls, continuous improvement, and systematic protection of information assets across organizations.

- SOC 1 / SOC 2 / SOC 3: SOC reports assess internal controls within an organization. SOC 1 focuses on financial reporting controls, SOC 2 evaluates security, availability, processing integrity, confidentiality, and privacy, while SOC 3 provides a public summary of SOC 2 assurance.

8. Observability and Monitoring

Anthos integrates deeply with Google Cloud’s observability stack.

Observability Components

- Metrics: Metrics are numerical time-series data that represent the health and performance of systems, such as CPU usage, memory consumption, request rates, and latency. In Anthos, metrics help teams track trends, evaluate service-level objectives, and proactively manage capacity and reliability.

- Logs: Logs are detailed, time-stamped records of events generated by applications, containers, and infrastructure. Anthos aggregates logs from all environments into a centralized system, enabling efficient debugging, security analysis, auditing, and root-cause investigation across distributed workloads.

- Traces: Traces follow a request as it flows through multiple services, showing end-to-end latency and dependencies. In microservices architectures, traces help identify performance bottlenecks, service failures, and inefficient communication paths, improving application optimization and reliability.

- Alerts: Alerts notify teams when predefined thresholds or abnormal conditions are detected in metrics or logs. Anthos alerting enables rapid response to incidents, reduces downtime, and supports proactive operations by warning teams before issues impact users.
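
Threshold-based alerting on a metric can be illustrated with a short Python sketch. It assumes one simple rule shape, "fire when the value stays above a threshold for N consecutive samples", which is only one of many possible alerting conditions; the function name and the latency values are hypothetical.

```python
from typing import Iterable, List


def find_alerts(samples: Iterable[float], threshold: float, for_samples: int) -> List[int]:
    """Return the sample indexes at which an alert fires.

    An alert fires once, at the moment the value has been above `threshold`
    for `for_samples` consecutive samples. It can fire again only after the
    value has dropped back to or below the threshold.
    """
    if for_samples < 1:
        raise ValueError("for_samples must be at least 1")

    fired = []
    streak = 0  # number of consecutive samples above the threshold
    for index, value in enumerate(samples):
        streak = streak + 1 if value > threshold else 0
        if streak == for_samples:
            fired.append(index)
    return fired


# Request latency in milliseconds, one sample per scrape interval.
latency_ms = [100, 250, 260, 270, 120, 300, 310, 320, 330]
alerts = find_alerts(latency_ms, threshold=200, for_samples=3)
```

Requiring several consecutive breaches trades a little detection delay for fewer alerts caused by short spikes.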

Tools Used

- Cloud Monitoring: Cloud Monitoring collects, visualizes, and analyzes metrics from applications, containers, and infrastructure. In Anthos environments, it provides dashboards, alerts, and uptime checks across hybrid and multi-cloud clusters, helping teams track performance, detect anomalies, and maintain service reliability.

- Cloud Logging: Cloud Logging centralizes logs from applications, Kubernetes workloads, and infrastructure components. It enables powerful search, filtering, and retention of logs across all Anthos clusters, supporting debugging, security analysis, compliance audits, and operational troubleshooting in distributed environments.

- Cloud Trace: Cloud Trace analyzes distributed traces to measure latency and performance of microservices. It helps identify bottlenecks, slow requests, and dependency issues across services, enabling developers and SREs to optimize application performance in complex, service-based architectures.

- Managed Prometheus: Managed Prometheus is a fully managed, scalable monitoring solution for collecting Prometheus-compatible metrics. It integrates natively with Kubernetes and Anthos, eliminating operational overhead while providing reliable, long-term metric storage and powerful querying for observability and alerting.

Benefits

- Unified visibility across all environments

- Faster troubleshooting

- Proactive issue detection

- SRE-friendly monitoring model

9. Anthos and Application Modernization

Anthos is a key enabler of cloud-native modernization.

Anthos plays a critical role in application modernization by enabling organizations to transform legacy and monolithic applications into cloud-native architectures at their own pace. It supports multiple modernization strategies, including lift-and-shift, replatforming, and refactoring into microservices. By providing a consistent Kubernetes-based platform across on-premises, cloud, and multi-cloud environments, Anthos reduces migration risk and complexity.

Development teams can modernize applications incrementally while maintaining existing systems. Anthos also integrates with CI/CD pipelines, service mesh, and GitOps workflows, accelerating innovation, improving scalability, and enhancing application reliability without forcing disruptive, all-at-once migrations.

Modernization Approaches Supported

- Rehost (lift-and-shift)

- Replatform

- Refactor into microservices

- Hybrid application architectures

Supporting Technologies

- Containers

- Kubernetes

- CI/CD pipelines

- Service mesh

- GitOps workflows

Anthos allows enterprises to modernize incrementally rather than through risky “big bang” migrations.

10. Hybrid and Multi-Cloud Use Cases

Hybrid Cloud Use Cases

Gradual Cloud Migration

Gradual cloud migration allows organizations to move applications to the cloud in phases rather than all at once. Anthos enables workloads to run consistently on-premises and in the cloud, reducing migration risk, avoiding downtime, and allowing teams to modernize applications incrementally.

Regulatory Data Residency

Some industries require data to remain in specific geographic locations due to legal or regulatory requirements. Anthos supports regulatory data residency by allowing sensitive data to stay on-premises or in approved regions while still integrating with cloud-based services and centralized management.

Latency-Sensitive Workloads

Latency-sensitive workloads require data processing close to users or devices to ensure fast response times. Anthos enables applications to run at the edge or on-premises while maintaining centralized control, making it suitable for real-time systems such as manufacturing, retail, and telecom.

Disaster Recovery

Anthos supports disaster recovery by enabling workloads to run across multiple environments and regions. Applications can be replicated between on-premises and cloud environments, allowing rapid failover, improved availability, and reduced downtime during outages or system failures.

Multi-Cloud Use Cases

Avoid Vendor Lock-In

Vendor lock-in occurs when applications become tightly coupled to a single cloud provider. Anthos uses open standards and Kubernetes to ensure application portability, allowing organizations to deploy workloads across multiple cloud platforms without rewriting applications or changing operational tools.

Improve Resilience

Multi-cloud architectures improve resilience by distributing workloads across different cloud providers. Anthos enables centralized management of these environments, reducing the impact of outages and improving availability by allowing workloads to fail over between clouds when necessary.

Geographic Expansion

Organizations expanding into new regions can use multi-cloud strategies to deploy applications closer to customers. Anthos supports consistent application deployment across cloud providers, helping businesses meet performance, compliance, and availability requirements in different geographic markets.

Mergers and Acquisitions

During mergers and acquisitions, organizations often inherit different IT platforms and cloud providers. Anthos simplifies integration by providing a unified Kubernetes management layer, allowing teams to manage diverse environments consistently while maintaining operational stability during transitions.

Anthos provides consistent tooling across all environments, reducing operational silos.

11. Anthos for Edge Computing

Anthos for Edge Computing enables organizations to deploy, manage, and operate applications closer to users, devices, and data sources. Using Anthos on Bare Metal, enterprises can run Kubernetes clusters on physical servers in edge locations such as retail stores, factories, telecom sites, and branch offices. This reduces latency, improves performance, and supports real-time processing even in environments with limited or unreliable connectivity.

Anthos provides centralized management, security policies, and observability for thousands of distributed edge locations. By extending cloud-native capabilities to the edge, Anthos helps organizations build resilient, scalable, and secure edge applications while maintaining operational consistency across all environments.

Anthos supports edge deployments using:

- Anthos on Bare Metal

- Lightweight Kubernetes clusters

Edge Use Cases

- Retail stores: In retail stores, edge computing enables real-time inventory tracking, point-of-sale processing, personalized customer experiences, and video analytics. Anthos allows applications to run locally in stores while being centrally managed, ensuring low latency, consistent updates, and reliable operations across hundreds or thousands of locations.

- Telecom networks: Telecom networks require ultra-low latency and high reliability for services such as 5G core functions, network slicing, and real-time traffic management. Anthos supports distributed Kubernetes deployments at the network edge, enabling telecom providers to deploy, scale, and manage services efficiently across geographically dispersed sites.

- Manufacturing plants: Manufacturing plants rely on real-time data processing for automation, quality control, and predictive maintenance. Anthos enables edge workloads to run close to machines and sensors, reducing latency and ensuring continued operations even during network disruptions, while maintaining centralized visibility and policy enforcement.

- IoT data processing: IoT environments generate massive volumes of sensor data that must be processed quickly. Anthos enables local data ingestion, filtering, and analytics at the edge, reducing bandwidth usage and cloud costs. Processed insights can then be securely sent to central systems for further analysis.

Benefits at the Edge

- Low latency: Edge deployments reduce the physical distance between applications and data sources, enabling faster response times. Anthos ensures workloads run close to users or devices, making it ideal for real-time applications that require immediate processing and minimal delay.

- Offline resilience: Edge environments may experience unreliable or intermittent network connectivity. Anthos allows applications to continue operating locally even when disconnected from the central cloud, ensuring business continuity and reliable operations until connectivity is restored.

- Centralized management: Anthos provides a centralized control plane to manage thousands of edge clusters consistently. Policies, updates, and configurations can be applied uniformly, reducing operational complexity and ensuring standardization across distributed edge environments.

- Secure remote operations: Anthos enables secure remote operations through identity-based access, encrypted communication, and centralized policy enforcement. This allows teams to manage edge environments safely without on-site intervention, reducing security risks and operational costs.

12. Cost Considerations in Anthos

Cost Model

Anthos pricing is based on:

- Number of vCPUs managed

- Infrastructure costs (compute, storage, networking)

- Optional add-ons
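
The three cost components above can be combined into a rough estimate. The Python sketch below assumes the platform fee is billed per vCPU per hour; that billing unit, the function name, and every number in the example are placeholders for illustration, not actual Anthos prices.

```python
def estimate_monthly_cost(
    vcpus: int,
    rate_per_vcpu_hour: float,
    hours: float,
    infrastructure: float,
    add_ons: float = 0.0,
) -> float:
    """Estimate a monthly bill from the three cost components.

    platform fee   = vcpus * rate_per_vcpu_hour * hours
    infrastructure = compute, storage, and networking charges
    add_ons        = optional extras
    """
    if vcpus < 0 or rate_per_vcpu_hour < 0 or hours < 0:
        raise ValueError("vcpus, rate, and hours must be non-negative")
    platform_fee = vcpus * rate_per_vcpu_hour * hours
    return platform_fee + infrastructure + add_ons


# Placeholder inputs: 100 vCPUs, a made-up rate of 0.01 per vCPU-hour,
# 730 hours in a month, 2000 in infrastructure, 150 in add-ons.
total = estimate_monthly_cost(100, 0.01, 730, infrastructure=2000.0, add_ons=150.0)
```

Because the platform fee scales linearly with managed vCPUs, right-sizing clusters reduces it directly.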

Cost Optimization Strategies

- Right-size clusters

- Use autoscaling

- Apply lifecycle policies

- Monitor unused resources

13. Advantages of Google Cloud Anthos

- True hybrid and multi-cloud support: Anthos enables applications to run consistently across on-premises data centers, Google Cloud, and other public clouds. This unified platform simplifies operations, reduces fragmentation, and allows organizations to adopt hybrid and multi-cloud strategies without changing application architectures or management tools.

- Kubernetes-native platform: Built on upstream Kubernetes, Anthos uses open standards and APIs to orchestrate containerized workloads. This Kubernetes-native design ensures portability, scalability, and compatibility while enabling consistent deployment, scaling, and management of applications across all environments.

- Strong security and governance: Anthos provides built-in security features such as identity-based access control, policy enforcement, mutual TLS, and secure software supply chains. Centralized governance ensures consistent security policies, compliance enforcement, and reduced risk across hybrid and multi-cloud infrastructures.

- Vendor-neutral approach: Anthos avoids proprietary lock-in by relying on open-source technologies and Kubernetes standards. This vendor-neutral approach allows organizations to deploy workloads across different cloud providers, maintain flexibility, and make infrastructure decisions based on business needs rather than platform limitations.

- Enterprise-grade observability: Anthos integrates advanced monitoring, logging, tracing, and alerting across all environments. This enterprise-grade observability provides deep visibility into application performance, reliability, and dependencies, enabling faster troubleshooting and proactive operations at scale.

- Incremental modernization support: Anthos supports gradual application modernization, allowing organizations to modernize workloads step by step. Teams can rehost, replatform, or refactor applications incrementally, reducing migration risk and enabling continuous innovation without disrupting existing systems.

14. Challenges and Considerations

- Learning curve for Kubernetes and service mesh

- Initial setup complexity

- Requires cultural shift toward DevOps and GitOps

- Cost justification needed for small workloads

Anthos is best suited for medium to large enterprises with complex environments.

15. Anthos vs App Engine vs Cloud Run

Google Cloud Anthos, App Engine, and Cloud Run address very different application needs on Google Cloud.

Anthos is designed for large-scale, enterprise, hybrid and multi-cloud environments. It is Kubernetes-based and best suited for organizations that need consistent application management across on-premises, Google Cloud, and other clouds. Anthos offers strong governance, security, and flexibility but comes with higher operational complexity and cost.

App Engine is a fully managed PaaS that abstracts infrastructure completely. Developers focus only on writing code, while Google handles scaling, patching, and availability. It is ideal for traditional web applications and APIs but offers limited control over runtime and environment customization.

Cloud Run is a serverless container platform that runs stateless containers on demand. It provides rapid scaling, pay-per-use pricing, and full control over the container runtime. Cloud Run is best for microservices, APIs, and event-driven workloads without the overhead of managing Kubernetes.

| Feature | Anthos | App Engine | Cloud Run |
|---|---|---|---|
| Primary Use | Hybrid & multi-cloud | Managed web apps | Serverless containers |
| Control Level | High (Kubernetes) | Low | Medium |
| Infrastructure Mgmt | User + Google | Fully Google-managed | Fully Google-managed |
| Scaling | Manual / Auto (K8s) | Automatic | Automatic |
| Best For | Enterprises, complex systems | Simple web apps | Microservices & APIs |

16. Anthos vs Traditional Cloud Platforms

| Feature | Traditional Cloud | Anthos |
|---|---|---|
| Deployment | Single cloud | Hybrid & multi-cloud |
| Portability | Limited | High |
| Governance | Fragmented | Centralized |
| Security | Platform-specific | Unified |
| Kubernetes | Optional | Core |

17. Summary

Google Cloud Anthos is a powerful, enterprise-grade platform that enables organizations to run applications consistently across on-premises, cloud, and multi-cloud environments. Built on Kubernetes and open standards, Anthos delivers flexibility, security, and operational simplicity at scale.

By unifying infrastructure, application management, and security under a single platform, Anthos empowers enterprises to modernize confidently without sacrificing control or portability.

Sumit is the creator & author at pmacad.com, where he writes about project management, technology, and modern delivery practices. He brings over a decade of experience across technology projects and delivery leadership.